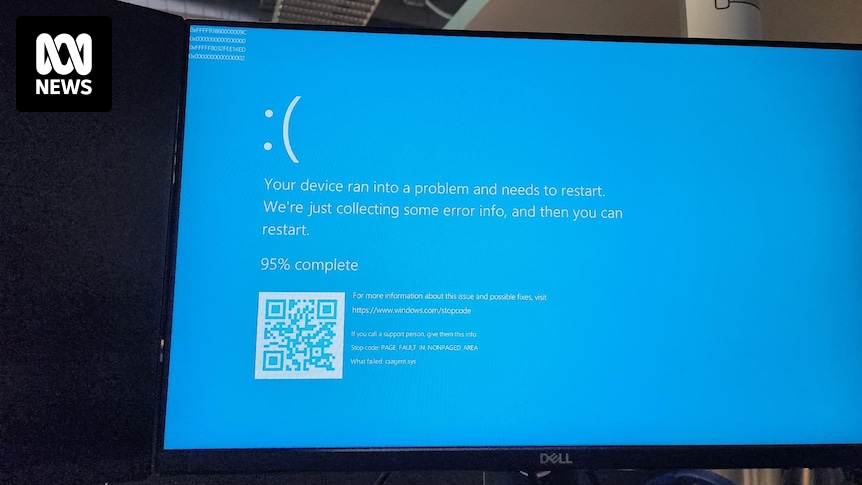

All our servers and company laptops went down at pretty much the same time. Laptops have been boot-looping to a blue screen of death. It’s all very exciting, personally, as someone not responsible for fixing it.

Apparently caused by a bad CrowdStrike update.

Edit: now being told we (who almost all generally work from home) need to come into the office Monday as they can only apply the fix in-person. We’ll see if that changes over the weekend…

Reading into the updates some more… I’m starting to think this might just destroy CrowdStrike as a company altogether. Between the mountain of lawsuits almost certainly incoming and the total destruction of any public trust in the company, I don’t see how they survive this. Just absolutely catastrophic on all fronts.

If all the computers stuck in boot loop can’t be recovered… yeah, that’s a lot of cost for a lot of businesses. Add to that all the immediate impact of missed flights and who knows what happening at the hospitals. Nightmare scenario if you’re responsible for it.

This sort of thing is exactly why you push updates to groups in stages, not to everything all at once.
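
For anyone wondering what "in stages" looks like in practice, here is a minimal sketch. Everything in it is hypothetical: `staged_rollout`, the `deploy` callback, and the 1% / 10% / 100% fractions are illustration only, not how CrowdStrike or any particular vendor ships updates. The idea is just that a small group gets the update first, and a failure there stops it from reaching everyone else.

```python
from typing import Callable, Iterable, List, Sequence


def staged_rollout(
    hosts: Sequence[str],
    stage_fractions: Iterable[float],
    deploy: Callable[[str], bool],
    max_failure_rate: float = 0.0,
) -> List[str]:
    """Deploy to growing groups of hosts, halting when a stage fails too often.

    `deploy` is a hypothetical callback that returns True when a host
    comes back healthy after the update. Returns the hosts updated successfully.
    """
    updated: List[str] = []
    done = 0  # number of hosts attempted so far
    for fraction in stage_fractions:
        # Cumulative target for this stage, e.g. 1%, then 10%, then 100% of the fleet.
        # Each stage covers at least one new host, capped at the fleet size.
        target = min(len(hosts), max(done + 1, int(len(hosts) * fraction)))
        batch = hosts[done:target]
        if not batch:
            break  # every host has already been attempted
        failures = 0
        for host in batch:
            if deploy(host):
                updated.append(host)
            else:
                failures += 1
        done = target
        if failures / len(batch) > max_failure_rate:
            # Halt: hosts in later stages never receive the bad update.
            break
    return updated
```

With a fleet of 100 hosts and fractions of `[0.01, 0.10, 1.0]`, an update that breaks every machine is attempted on one host and then stops, instead of taking down all 100.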

Looks like the laptops are able to be recovered with a bit of finagling, so fortunately they haven’t bricked everything.

And yeah staged updates or even just… some testing? Not sure how this one slipped through.

Agreed, this will probably kill them over the next few years unless they can really magic up something.

They probably don’t get sued - their contracts will have indemnity clauses against exactly this kind of thing, so unless they seriously misrepresented what their product does, this probably isn’t a contract breach.

If you are running CrowdStrike, it’s probably because you have some regulatory obligations and an auditor to appease. You aren’t going to be able to just turn it off overnight, but I’m sure there are going to be some pretty awkward meetings when it comes to contract renewals in the next year, and I can’t imagine them seeing much growth.

Nah. This has happened with every major corporate antivirus product. Multiple times. And the top IT people advising on purchasing decisions know this.

Yep. This is just uninformed people thinking this doesn’t happen. It’s been happening since AV was born. It’s not new, and this will not kill CS; they’re still king.

At my old shop we still had people giving money to Check Point and Splunk, despite numerous problems and a huge cost, because they had favourites.

Don’t most indemnity clauses have exceptions for gross negligence? Pushing out an update this destructive without it getting caught by any quality control checks sure seems grossly negligent.

Can’t; the project manager ate all the crayons

Why is it bad to do on a Friday? Based on your last paragraph, I would have thought Friday is probably the best week day to do it.

Most companies, mine included, try to roll out updates during the middle or start of a week. That way if there are issues the full team is available to address them.

And hence the term read-only Friday.
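
Some teams enforce this in tooling rather than by convention. A rough sketch, assuming "start or middle of the week" means Monday to Wednesday; that cutoff and the `rollout_allowed` helper are my own hypothetical choices, not any standard:

```python
from datetime import date

# Assumption: rollouts are allowed Monday to Wednesday only.
# date.weekday() returns 0 for Monday through 6 for Sunday.
ALLOWED_WEEKDAYS = {0, 1, 2}


def rollout_allowed(day: date) -> bool:
    """Return True when `day` falls inside the allowed rollout window."""
    return day.weekday() in ALLOWED_WEEKDAYS
```

A deploy script could call `rollout_allowed(date.today())` and refuse to continue when it returns False.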

Or someone selected “env2” instead of “env1” (#cattleNotPets names) and tested in prod by mistake.

Look, it’s a gaffe and someone’s fired. But it doesn’t mean fuck ups are endemic.

Was it not possible for MS to design their safe mode to still “work” when BitLocker was enabled? Seems strange.

I’m not sure what you’d expect to be able to do in a safe mode with no disk access.

I think you’re on the nose, here. I laughed at the headline, but the more I read the more I see how fucked they are. Airlines. Industrial plants. Fucking governments. This one is big in a way that will likely get used as a case study.

The London Stock Exchange went down. They’re fukd.

Testing in production will do that

Not everyone is fortunate enough to have a separate testing environment, you know? Manglement has to cut costs somewhere.

Manglement is a good term lmao

Don’t we blame MS at least as much? How does MS let an update like this push through their Windows Update system? How does an application update make the whole OS unable to boot? Blue screens on Windows have been around for decades, why don’t we have a better recovery system?

CrowdStrike runs at ring 0, effectively as part of the kernel. Like a device driver. There are no safeguards at that level. Extreme testing and diligence are required, because these are the consequences for getting it wrong. This is entirely on CrowdStrike.

What lawsuits do you think are going to happen?

They can have all the clauses they like but pulling something like this off requires a certain amount of gross negligence that they can almost certainly be held liable for.

Whatever you say my man. It’s not like they go through very specific SLA conversations and negotiations to cover this or anything like that.

I forgot that only people you have agreements with can sue you. This is why Boeing hasn’t been sued once recently for their own criminal negligence.

👌👍

😔💦🦅🥰🥳

Forget lawsuits, they’re going to be in front of Congress for this one

For what? At best it would be a hearing on the challenges of national security with industry.